AI Citation Ranking Factors Analysis

Evidence-Based Analysis of 54 Experiments, Patents, and Case Studies. Plus get a free AI Citation checklist.

👋 Subscribe free (it helps me produce research like this) and get the 7-Step AI Citation Checklist with proven tips + tactics on how to win more visibility. Already subscribed? Check your inbox in the next few days.

Everyone is talking about AI citations.

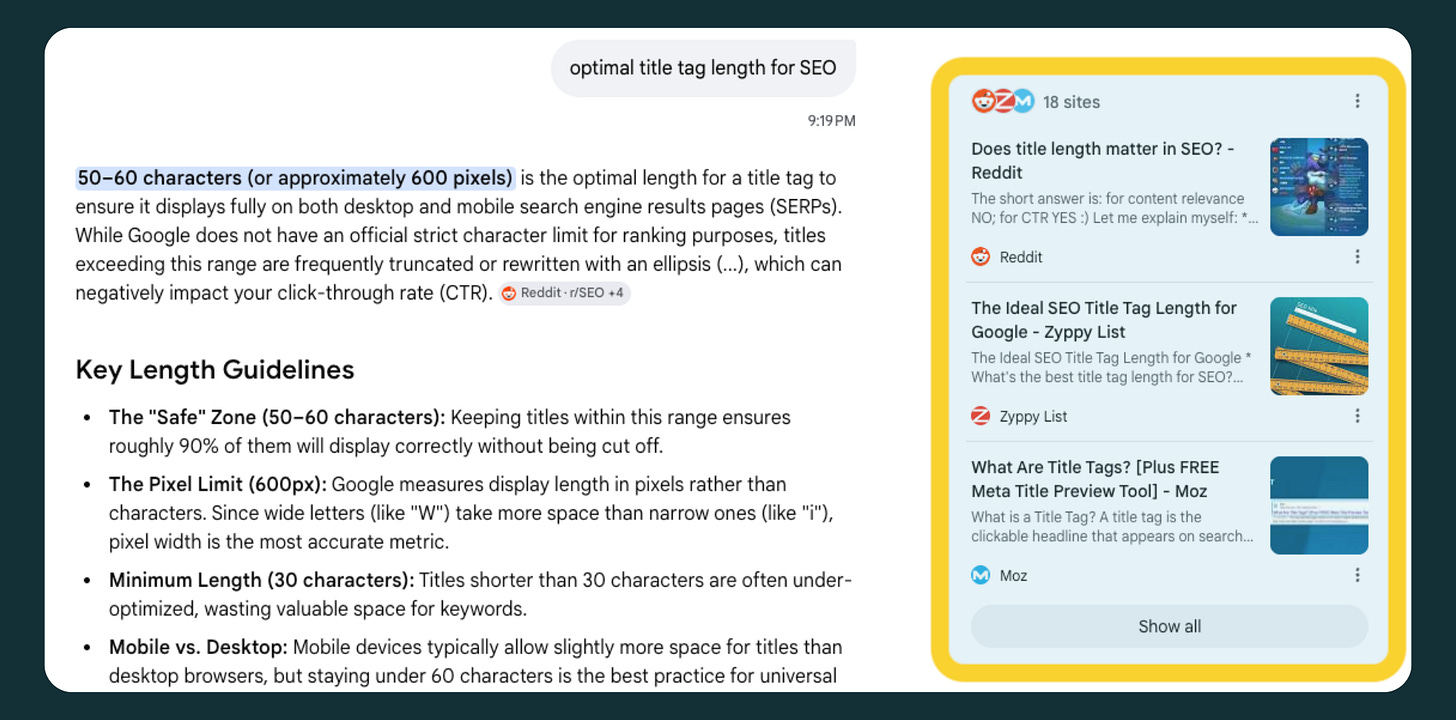

To make sure we’re on the same page, what do we mean by AI citations? While they vary by platform, AI citations are clickable links to sources that AI engines use to support their answers. Like these below.

While there’s no debate that AI answers reduce clicks to the open web, citations can act as a safety valve for publishers. A recent study by Seer Interactive shows that getting cited in Google’s AI Overviews results in 120% more organic clicks per impression and a 41% increase in paid clicks compared with when your brand is not cited.

It seems every week we see a solid new AI citation study, framework, or even debate over what works.

But we observe that most marketers aren’t implementing these tactics in their workflows, either because they aren’t sure what’s important or because of information overload.

To address this, I downloaded nearly every AI citation experiment, study, explainer, and patent published over the past couple of years. These covered various AI engines, including ChatGPT, Gemini, and Perplexity. I then narrowed the set down to the 54 most salient and helpful sources. I categorized the findings from each and cross-referenced them to identify the observations that recurred most often.

The AI Citation Ranking Factors you’ll see below are based on actual data from the published experiments and studies. The strength of each “factor” is based on:

Repeatability: How many times a similar finding appeared across multiple studies, and how consistent the outcomes (positive or negative) were across those studies.

Strength of Evidence: E.g., a 50-million-query study carries more weight than a 10-query case study.

Official Support: Whether or not the factor is supported by official docs, technical specs, or patents.

After all this evidence was tabulated, I manually assigned a score based on these criteria, using AI to help fine-tune the numbers.

Before we go any further, I want to acknowledge and thank many of the authors, researchers, and thought leaders who made this research possible. In a world of fake “Top 10” gurus, these are a few (but not all) of the authentic AI search folks whom I rely on to learn more about the AI universe, and they deserve a follow:

Dan Petrovic • Kevin Indig • Metehan Yesilyurt • Oshen Davidson • Lily Ray • David McSweeney • Aleyda Solís • Britney Muller • Dawn Anderson • Michael King • Andrea Volpini • Rand Fishkin • Ann Smarty • Cyrus Shepard (kidding! But if you want to follow me, there I am ;)

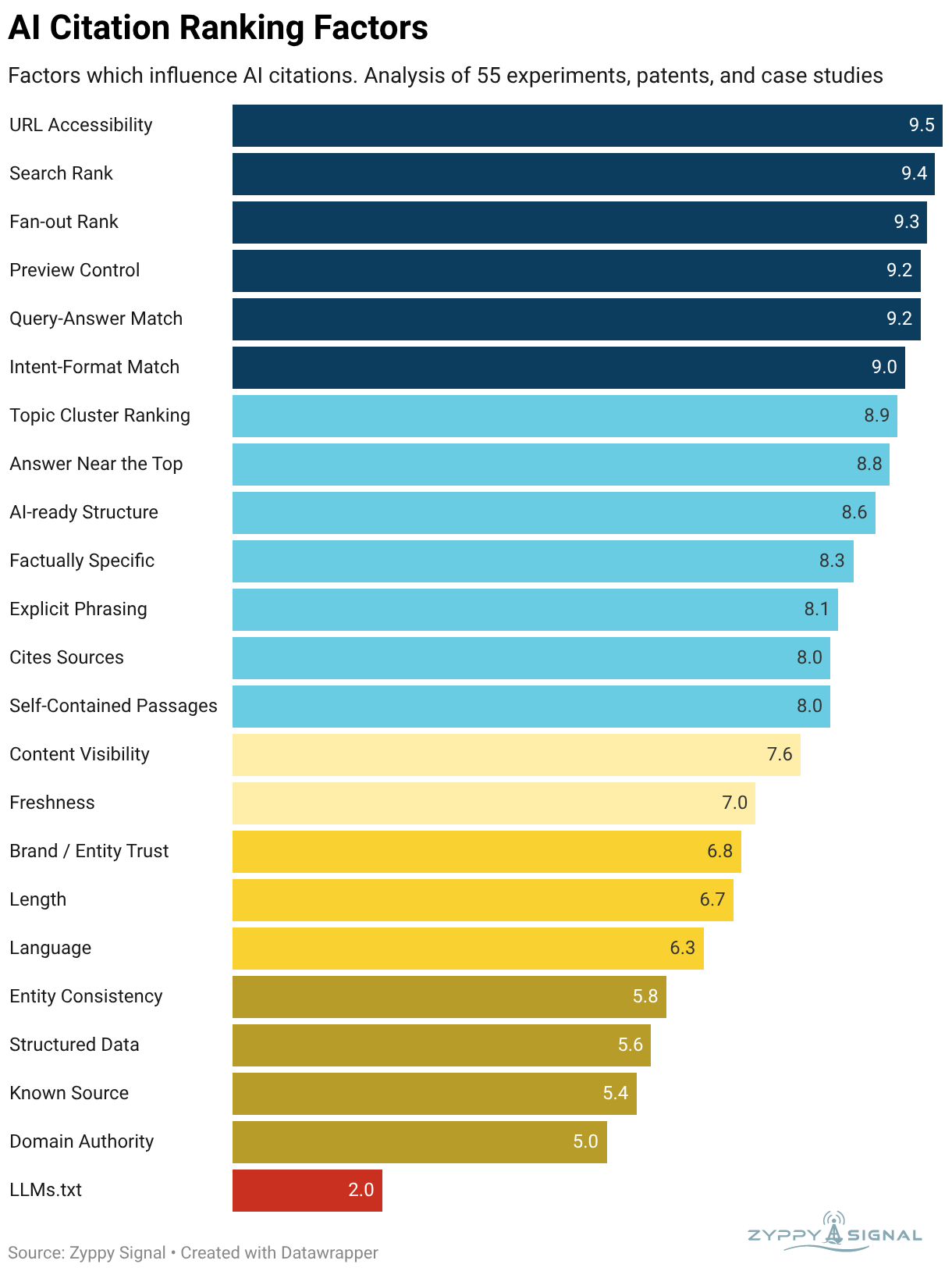

Below are the most evidence-based characteristics of content that earn AI citations. Be sure to read the explanation of each factor beneath the graph, and get your free copy of the AI Citation Checklist, which shows you practical steps to put these factors into practice.

TL;DR - While the evidence suggests that specific practices can boost AI citations, most of the critical factors align with traditional SEO practices. This is important because there’s much debate in the AI / SEO world about whether optimizing for AI visibility requires a different toolset.

In this case: win SEO, win AI citations (most of the time, with extra steps).

AI Citation Ranking Factor Descriptions

1. URL Accessibility

Score: 9.5 | Definition: Page is available and crawlable during training/grounding

This is basic SEO applied to AI engines. A URL typically needs to be accessible and crawlable, either during training or grounding, for an AI engine to cite it.

That said, this has grown increasingly complex as AI companies add more user agents to crawl the web (OAI-SearchBot, GPTBot, Google-Extended, etc.) and companies like Cloudflare offer AI-scraper-blocking protections. The end result is that it’s easier than ever to exclude pages from AI systems and lower the probability of citations.
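One quick way to audit this is to test your robots.txt rules against the AI user agents named above. The sketch below uses Python's standard `urllib.robotparser`. The robots.txt content and the example.com URLs are made-up placeholders, and the check only covers robots.txt rules, not firewall or CDN-level blocking such as Cloudflare's.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt, for illustration only.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /private/
"""

# Tokens mentioned in the article; extend with any others you care about.
AI_USER_AGENTS = ["OAI-SearchBot", "GPTBot", "Google-Extended"]


def check_ai_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Return {user_agent: allowed?} for one URL under the given robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_USER_AGENTS}


if __name__ == "__main__":
    result = check_ai_access(SAMPLE_ROBOTS_TXT, "https://example.com/guide")
    for agent, allowed in result.items():
        print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

With this sample file, GPTBot is blocked site-wide while the other two agents fall through to the wildcard rule, which is the kind of accidental split that is easy to miss.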

2. Search Rank

Score: 9.4 | Definition: How the URL ranks for the exact query

Many, many studies have found a clear relationship between ranking highly in “traditional” search and AI citations. Ahrefs found that 38% of AI Overviews citations come from the top 10 Google results. Go beyond the top 10, and the overlap increases.

ChatGPT is more complex because it doesn’t fully disclose its search sources, but AirOps found a strong relationship between “retrieval rank” and citations from ChatGPT.
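If you want to measure this overlap on your own data, a minimal sketch might look like the following. It assumes you have already collected the URLs an AI answer cited and the ranked organic results for the same query. The function name and the example.com URLs are hypothetical, and this is not how Ahrefs or AirOps ran their studies.

```python
def citation_overlap(cited_urls: list[str], ranked_urls: list[str], top_n: int = 10) -> float:
    """Share of cited URLs that also appear in the top_n organic results."""
    if not cited_urls:
        return 0.0
    top_results = set(ranked_urls[:top_n])
    hits = sum(1 for url in cited_urls if url in top_results)
    return hits / len(cited_urls)


if __name__ == "__main__":
    # Placeholder data: four citations, two of which rank in the top 10.
    cited = [
        "https://example.com/a",
        "https://example.com/b",
        "https://example.com/c",
        "https://example.com/d",
    ]
    ranked = ["https://example.com/a", "https://example.com/x", "https://example.com/b"]
    print(f"{citation_overlap(cited, ranked):.0%} of citations are in the top 10")
```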

3. Fan-out Rank

Score: 9.3 | Definition: How the URL ranks for related fan-out queries

Aside from ranking for the main query, AI engines will perform many “fan-out” searches to supplement and ground their answers. Lots of evidence shows a clear relationship between ranking highly for fan-out queries and earning AI citations.

4. Preview Control

Score: 9.2 | Definition: Preview controls, such as "nosnippet," can influence visibility

More specifically for Google and Bing, preview controls are directives that allow publishers to control how much of a page search engines can show in snippets and on some AI surfaces. Examples include “nosnippet” and “data-nosnippet”. Limiting the visibility of specific text can lower AI visibility.
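To see which preview controls a page is already sending, you can scan its HTML for them. Here is a rough sketch using Python's built-in `html.parser`. The class name, function name, and sample markup are my own placeholders, and the sketch only looks at meta tags and `data-nosnippet` attributes, not the `X-Robots-Tag` HTTP header.

```python
from html.parser import HTMLParser


class PreviewControlAudit(HTMLParser):
    """Collects page-level robots directives and data-nosnippet elements."""

    def __init__(self) -> None:
        super().__init__()
        self.robots_directives: list[str] = []
        self.data_nosnippet_tags: list[str] = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        # e.g. <meta name="robots" content="nosnippet, max-snippet:50">
        if tag == "meta" and (attr_map.get("name") or "").lower() in {"robots", "googlebot"}:
            content = attr_map.get("content") or ""
            self.robots_directives += [d.strip().lower() for d in content.split(",") if d.strip()]
        # e.g. <div data-nosnippet>...</div> (boolean attribute, value is None)
        if "data-nosnippet" in attr_map:
            self.data_nosnippet_tags.append(tag)


def audit_preview_controls(html: str) -> PreviewControlAudit:
    audit = PreviewControlAudit()
    audit.feed(html)
    audit.close()
    return audit


# Hypothetical page, for illustration only.
SAMPLE_HTML = """
<html><head><meta name="robots" content="max-snippet:50"></head>
<body><p>Visible summary.</p><div data-nosnippet>Pricing details</div></body></html>
"""

if __name__ == "__main__":
    audit = audit_preview_controls(SAMPLE_HTML)
    print("Robots directives:", audit.robots_directives)
    print("Elements marked data-nosnippet:", audit.data_nosnippet_tags)
```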

5. Query-Answer Match

Score: 9.2 | Definition: The page content closely matches the query (primary/fan-out)

Many studies have documented a “semantic closeness” between the AI answer and the cited content. This often means that page titles, subheads, and content closely match both the search query and the AI answer.

6. Intent-Format Match

Score: 9 | Definition: The page type matches the intent of the query, e.g., listicles for "best" queries

AI engines tend to cite articles whose content format is best suited to the query. For example, “best”-type questions (“best probiotics for men”) might prefer a listicle or comparison table, whereas “how-to” queries (“how to build a bird house”) are more likely to reward a step-by-step guide.

This may simply be an artifact of the Search Rank and Fan-out Rank factors, since pages that rank well for a query often already match its intent.

7. Topic Cluster Ranking

Score: 8.9 | Definition: The degree to which the site ranks for multiple queries (primary + fan-out)

This is an interesting one, but easy to understand. The concept is that ranking for multiple related queries increases your chances of being cited at least once. The RRF Top-n Playbook, while technically challenging, was one of my favorite experimental explainers.
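To make the idea concrete, here is a minimal sketch, assuming “RRF” refers to reciprocal rank fusion, where each URL earns 1 / (k + rank) for every result list it appears in. The queries, URLs, and function name are hypothetical. The constant k = 60 is the value commonly used in the RRF literature, and nothing here confirms that any particular AI engine fuses results this way.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: dict[str, list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Fuse per-query rankings: each URL earns 1 / (k + rank) per list it appears in."""
    scores: dict[str, float] = defaultdict(float)
    for ranked_urls in rankings.values():
        for rank, url in enumerate(ranked_urls, start=1):
            scores[url] += 1.0 / (k + rank)
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Placeholder data: one primary query plus two fan-out queries.
RANKINGS = {
    "best probiotics for men": ["one-hit.example/a", "other.example/x", "cluster.example/guide"],
    "probiotic benefits": ["other.example/y", "unrelated.example/z", "cluster.example/guide"],
    "probiotics side effects": ["third.example/p", "fourth.example/q", "cluster.example/guide"],
}

if __name__ == "__main__":
    for url, score in reciprocal_rank_fusion(RANKINGS):
        print(f"{score:.4f}  {url}")
```

In this toy data, the page that ranks third for all three queries outscores the page that ranks first for only one, which is the intuition behind ranking across a whole topic cluster.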

8. Answer Near the Top

Score: 8.8 | Definition: Important content placed near the top of the page is more likely to be cited

One thing that becomes immediately clear when reading all of these papers is that AI engines don’t treat all the text on the page equally. Dan Petrovic showed how Google’s Gemini uses a strict retrieval cap per URL, and content near the top of the page is more likely to make the cut. Several other studies support this as well.
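A simple way to sanity-check your own pages is to see whether the key answer lands inside a fixed budget at the top of the page text. This is only a sketch: the 2,000-character budget is a placeholder I made up, not the actual cap, and real engines may count tokens rather than characters.

```python
def answer_within_budget(page_text: str, key_passage: str, char_budget: int = 2000) -> bool:
    """True if key_passage ends inside the first char_budget characters of the page."""
    start = page_text.find(key_passage)
    if start == -1:
        return False  # the passage is not on the page at all
    return start + len(key_passage) <= char_budget


if __name__ == "__main__":
    answer = "Experts recommend 0.8 grams of protein per kilogram of body weight."
    filler = "Background paragraph. " * 200  # stands in for a long introduction

    print(answer_within_budget(answer + " " + filler, answer))  # answer leads the page
    print(answer_within_budget(filler + answer, answer))        # answer is buried
```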

9. AI-ready Structure

Score: 8.6 | Definition: Content is formatted so that AI can easily extract and understand information

Following the idea that AI engines typically don’t retrieve your entire page, consider that they break pages into sections before retrieval. If your content isn’t clearly organized, this can make the job more complex.

This doesn’t mean you need to “chunk” your content into bite-sized pieces; simply provide a clear structure with headings, sections, tables, etc. In fact, many studies found a clear relationship between these types of features and AI citations.
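To picture what “breaking pages into sections” might look like, here is a small sketch that splits markdown-style text on its headings. How any given AI engine actually segments a page isn't documented in these studies, so treat the approach, the function name, and the sample text as illustrative assumptions.

```python
import re

# Matches markdown headings such as "## Best sources".
HEADING = re.compile(r"^(#{1,6})\s+(.*)$")


def split_by_headings(markdown_text: str) -> list[dict[str, str]]:
    """Split text into sections, one per heading, keeping each heading with its body."""
    sections = [{"heading": "(intro)", "lines": []}]
    for line in markdown_text.splitlines():
        match = HEADING.match(line)
        if match:
            sections.append({"heading": match.group(2).strip(), "lines": []})
        else:
            sections[-1]["lines"].append(line)

    result = []
    for section in sections:
        body = "\n".join(section["lines"]).strip()
        if section["heading"] == "(intro)" and not body:
            continue  # nothing appeared before the first heading
        result.append({"heading": section["heading"], "body": body})
    return result


# Placeholder page content.
SAMPLE = """# Protein Guide
Short intro.

## How much protein do adults need?
Experts recommend 0.8 grams of protein per kilogram of body weight.

## Best sources
Eggs, legumes, fish.
"""

if __name__ == "__main__":
    for section in split_by_headings(SAMPLE):
        print(f"[{section['heading']}] {section['body']}")
```

A page with clear headings produces sections that each carry their own label, while a wall of text comes back as one undifferentiated block.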

10. Factually Specific

Score: 8.3 | Definition: Pages and passages that showcase specific, verifiable facts

Because AI citations are included to support specific claims in the AI answer itself, it seems to help if you mirror that with specific facts that the AI can reference. Statements like “Adults need a lot of protein” aren’t as specifically citable as “Experts recommend 0.8 grams of protein per kilogram of body weight.”

11. Explicit Phrasing

Score: 8.1 | Definition: Specific claims over vague statements

A cousin of “Factually Specific,” this factor reflects that AI engines appear to prefer phrases that are more definitive, without hedging. For example, “Some people prefer magnesium glycinate, while others use citrate or threonate...” is a far weaker phrase than “Magnesium glycinate is the best choice for sleep.”

12. Cites Sources

Score: 8 | Definition: Facts are backed up with referenced sources

Several studies found that facts with clearly cited sources are associated with more frequent AI citations. This makes sense as AI engines are working to generate answers and citations they can justify.

Practically speaking, this doesn’t mean you have to add citations to all of your content, but it would be prudent to “show your work” and demonstrate how you arrived at important conclusions.

13. Self-Contained Passages

Score: 8 | Definition: Important statements can stand alone without additional context

The idea of “Self-Contained Passages” simply means you're expressing key facts or points fully within sentences or blocks of text.

For example, if you say “This ingredient has better evidence,” the AI engine is forced to parse your meaning from other areas of text. What ingredient? What evidence? But if you say “Magnesium glycinate is supported by 137 scientific studies for heart health,” the information is unambiguous and self-contained.
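A crude way to spot passages like the first one is to flag sentences that open with a pronoun or demonstrative. This is a rough heuristic of my own, not something drawn from the studies. It will miss plenty of cases and flag some harmless ones, and the word list is an assumption.

```python
import re

# Openers that usually point back to earlier text (a heuristic list, not exhaustive).
VAGUE_OPENERS = {"this", "that", "these", "those", "it", "they", "he", "she"}


def flag_context_dependent(text: str) -> list[str]:
    """Return sentences whose first word is a pronoun or demonstrative."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        words = re.findall(r"[A-Za-z']+", sentence)
        if words and words[0].lower() in VAGUE_OPENERS:
            flagged.append(sentence)
    return flagged


if __name__ == "__main__":
    passage = (
        "This ingredient has better evidence. "
        "Magnesium glycinate is supported by 137 scientific studies for heart health."
    )
    for sentence in flag_context_dependent(passage):
        print("Needs context:", sentence)
```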

14. Content Visibility

Score: 7.6 | Definition: Important information is in visible HTML text, not hidden

Modern web pages can contain a lot of text that’s not immediately visible, at least not without a lot of JavaScript or by requiring users to click on divs and tabs. We’ve known for a long time that even Google doesn’t seem to rank content as well when it’s not clearly visible on the page, and AI engines appear to share this bias.
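One practical check is whether a key phrase appears in the raw HTML as visible text, before any JavaScript runs. The sketch below uses Python's built-in `html.parser`. It assumes reasonably well-formed HTML with explicit closing tags, only catches the `hidden` attribute and inline `display:none`, and says nothing about how a specific AI engine renders pages. The class name, function name, and sample markup are placeholders.

```python
from html.parser import HTMLParser

SKIP_TAGS = {"script", "style", "template", "noscript"}
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img",
             "input", "link", "meta", "source", "track", "wbr"}


class VisibleTextExtractor(HTMLParser):
    """Collects text that is present in raw HTML and not obviously hidden."""

    def __init__(self) -> None:
        super().__init__()
        self.chunks: list[str] = []
        self._hidden_depth = 0  # > 0 while inside a skipped or hidden element

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return  # void elements have no closing tag to balance
        attr_map = dict(attrs)
        style = (attr_map.get("style") or "").replace(" ", "").lower()
        is_hidden = tag in SKIP_TAGS or "hidden" in attr_map or "display:none" in style
        if self._hidden_depth or is_hidden:
            self._hidden_depth += 1

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS and self._hidden_depth:
            self._hidden_depth -= 1

    def handle_data(self, data):
        if not self._hidden_depth and data.strip():
            self.chunks.append(data.strip())


def phrase_in_visible_html(html: str, phrase: str) -> bool:
    extractor = VisibleTextExtractor()
    extractor.feed(html)
    extractor.close()
    return phrase.lower() in " ".join(extractor.chunks).lower()


# Hypothetical page, for illustration only.
SAMPLE_HTML = """
<article>
  <h1>Magnesium for sleep</h1>
  <p>Magnesium glycinate is a common choice.</p>
  <div hidden><p>Dosage table loads on click.</p></div>
  <script>renderTab("Reviews injected by JavaScript");</script>
</article>
"""

if __name__ == "__main__":
    for phrase in ("Magnesium glycinate", "Dosage table", "Reviews injected"):
        print(phrase, "->", phrase_in_visible_html(SAMPLE_HTML, phrase))
```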

15. Freshness

Score: 7 | Definition: How current the information is

Freshness is a known SEO ranking factor, and several studies have found a correlation between document freshness and AI citations. Like traditional search, freshness seems to vary by query. A question about a recent sports match will require more up-to-date information than a question about British history.

16. Brand / Entity Trust

Score: 6.8 | Definition: How much the AI engine knows about and trusts the brand/website

Increasingly, it appears AI engines seek out more credible sources of information. This means that what they already know about you may influence how much they trust you and whether they seek you out. For a health-related query, an AI engine is more likely to trust the Mayo Clinic than an anonymous health blog. Google works this way too, so there’s probably some overlap.

17. Length

Score: 6.7 | Definition: The length, in word count, of the content

Many, many studies looked at how content length correlated with AI citations. While the majority found that longer content tended to perform better, the evidence was inconsistent. Several researchers pointed out that longer content also reduced the likelihood that AI engines would retrieve all of your content.

18. Language

Score: 6.3 | Definition: The language of the content

The studies showed a clear bias towards the language—and sometimes location—of the question. A question asked in French by someone in France is more likely to use French citations.

19. Entity Consistency

Score: 5.8 | Definition: The use of consistent names for products, brands, people, etc.

Entity consistency simply refers to using consistent naming conventions for brands, people, products, etc.

For example, I could write “Zyppy makes SEO software that helps marketers rank.” That’s clearer for both search engines and users than “Zyppy makes SEO software. My software helps marketers rank,” where the reader has to work out that “my software” refers to Zyppy.

20. Structured Data

Score: 5.6 | Definition: Page contains schema to identify entities and support content

Oh boy. There are few debates more heated in the SEO/AI space than the use of structured data for AI optimization. While it’s true that LLMs don’t typically ingest schema as part of their training, there’s limited evidence that they can at least see schema when searching (though they don’t use it in a schema-like way).

Regardless, we’re not here to debate that.

We’re here because practically every study that looks at schema and AI citations finds a positive relationship. The effect is typically small, but it’s amazingly consistent across studies.
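If you want to add schema without hand-writing JSON, a small helper can generate it. This sketch builds a basic schema.org `Article` block as JSON-LD. The function name and every value passed to it are placeholders, and which properties (if any) AI engines pay attention to is not something the studies establish.

```python
import json


def build_article_jsonld(headline: str, author_name: str,
                         date_published: str, date_modified: str) -> str:
    """Return a <script> tag containing schema.org Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "dateModified": date_modified,
    }
    payload = json.dumps(data, indent=2)
    # Escape "</" so a value can never close the script tag early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    # Placeholder values, for illustration only.
    print(build_article_jsonld("Example headline", "Jane Doe", "2025-01-01", "2025-02-01"))
```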

21. Known Source

Score: 5.4 | Definition: The URL is already known to the AI engine via its training data

Sometimes (quite often, in fact) an AI will cite a URL simply because it knows about it from its existing training data. This behavior is more typical of ChatGPT and Perplexity, and it can bypass the usual grounding/search phase, leading to citations of URLs that no longer exist.

22. Domain Authority

Score: 5 | Definition: A link-based measure of website popularity

Several studies looked at the relationship between Domain Authority and AI citations. While many found a relationship, it was often weak.

23. LLMs.txt

Score: 2 | Definition: The website hosts an LLMs.txt file for AI engines

To be fair, I’m not certain many of these studies even considered the influence of LLMs.txt files. That said, we’re unable to find any credible evidence or experiments showing LLMs.txt files influence AI citations in any way.

The Steps To Earn More AI Citations

Ultimately, you don’t need an entirely new SEO playbook to earn AI citations, but you may want to tweak a few practices and execute a few other SEO strategies even better.

The overlap between traditional SEO and AI citation signals is significant. Additionally, many of these factors, when properly implemented, can improve the experience of human users on your page, which should be priority #1.

If we had to sum them up, they might be: Relevance, Trust, Topical Authority, and Extractability, all signals that should align with current SEO thinking. A few of the technical details shift, but we can still focus on creating great experiences.

Happy citation earning!